Prompting GPT is hard - here’s what I learned building a creativity training app using the ChatGPT API

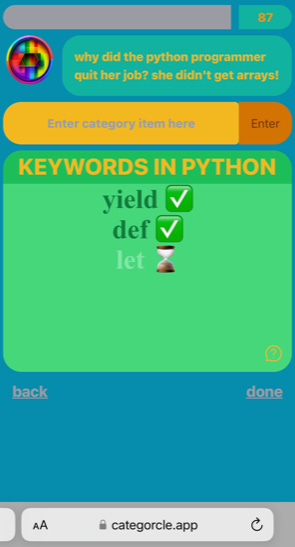

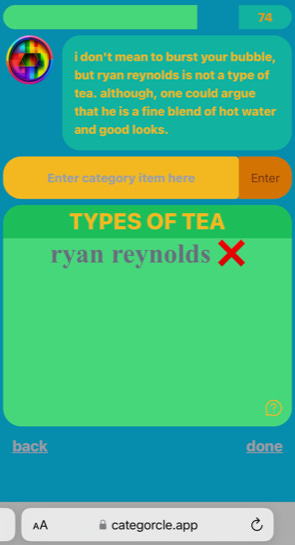

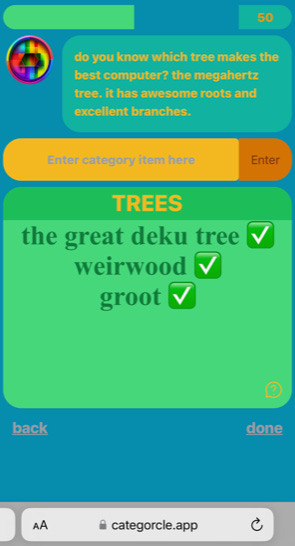

(Screenshot from Categorcle, a toy app that uses GPT behind the scenes to train word-finding ability in aphasia patients, and creativity in everyone else!)

Creative Applications

Excited to test the limits of this new technology for real-time logic, I decided to build Categorcle, a toy web app that trains your creativity. The game is akin to Scattergories; the player is given a category (e.g. “superpowers”), and must think of and submit items (e.g. “invisibility”, “super strength”). Months ago, I had an idea to build a game like this because I thought it could be useful for treating aphasia patients by training word finding ability. When the ChatGPT API was released, it decreased the cost of intelligence by 90% (over text-davinci-003), and made an application like this viable. There were a lot of things that were exciting about this:

Instead of requiring a preset list of valid words, and a finite number of categories, I could instead ask GPT if what the user wrote belongs to the category.

Instead of having preset categories, the user could enter any category they want (if they so choose).

Answers wouldn’t need to be checked literally. Typos or tip of the tongue answers would be resolved as correct.

Another feature I thought would be interesting is if the AI could make sarcastic comments about your answers or your performance. This turned out really well; the AI is able to produce some good jokes!

Interestingly, I found that it’s actually more fun to read what the AI is saying than it is to play the game itself — and the timer is counting down while this happens, so it’s just not a great format for it. I also found that the AI is more comical if you intentionally give it wrong answers:

Practical Prompting Principles

Along the way of developing the prompts for this game, I learned a few lessons / principles about prompt engineering that I think could be valuable to others. That said, these are purely results of trial and error and intuition — I didn’t rigorously test them in a controlled manner, but I have found them to be useful guidelines in other prompts I have worked on since. Much of this may be obvious to the reader, but it’s useful to have it be concrete.

One-shot or zero-shot prompts often surprisingly work better than explicit, verbose instructions

Here’s the first attempt I made at the main prompt for the game:

The following is a game where the user attempts to generate items from a given category. Given a category and a user submission,

determine if the user made a small typo. If they are off by only one or two characters, then count it as a "yes".

In the "belongs-in-category:" section, write either "yes" or "no". Do not write anything other than "yes", or "no".

Here is an example:

category: "aquatic animals"

user-submission: "dolphin"

belongs-in-category: "yes"

Here is another example, where the user made a small typo:

category: "countries in europe"

user-submission: "franch"

belongs-in-category: "yes"

Here is another example, where the user was incorrect:

category: "countries in europe"

user-submission: "paris"

belongs-in-category: "no"

Please determine if the user made a typo and determine whether or not the user's submission belongs in the category for the following:

category: "CATEGORY"

user-submission: "SUBMISSION"

belongs-in-category:

Note the verbose explanation at the beginning, followed by several examples each trying to showcase different intended behaviors. This prompt didn’t work very well, in part due to locality issues explained below, but also a lack of precision of language. Compare this to the succinct prompt below:

category: "aquatic animals"

user-submission: "dolphin"

belongs-in-category: "yes"

category: "CATEGORY"

user-submission: "SUBMISSION"

belongs-in-category:

This worked much better.

To anthropomorphize matrix multiplication, it seems that providing verbose instructions may have actually been confusing. Perhaps if my instructions were more precise, the first prompt might have worked — but this is both cheaper and faster!

The overused cliques seem to be applicable here: less is more. Keep it simple, stupid.

I thought I would take this a step further, and tried the following zero shot prompt:

Does "SUBMISSION" belong to the category "CATEGORY"? Answer only with "yes" or "no".

Answer:

This prompt worked with high accuracy for many categories. But on certain categories, it completely fails. It seems that the examples are necessary to show exactly what human intuitions are surrounding the concept of “belonging to a category”. For example, consider the category: “trees”. Do we mean types of trees (oak, pine)? Do we mean proper noun, notable trees (https://en.wikipedia.org/wiki/List_of_individual_trees)? Do we allow fictional trees (Weirwoods, The Great Deku Tree)? Does Groot count?

API latency requires you to bundle prompts

There’s quite a bit of demand for the cheapest API option, gpt-3.5-turbo. The median latency I have observed from the ChatGPT API on some days is ~16 seconds, which is certainly not ideal. The app first asks whether or not the user submitted item belongs in the given category, but also asks whether or not this item is just a duplicate of anything the user already submitted. This way, you can’t write “apples” and “apple slices” even though both of those might be considered valid independently. Based on this information, it writes a sarcastic comment or joke. This depends on the answer of the first two prompts — the comment should be different based on whether they got it right or if they attempted to write something too similar.

If you need to execute multiple prompts that depend on the output of prior prompts, then you have two options:

Send separate requests in series, multiplying the latency

Send one request with multiple questions

The second option is much faster, and will be cheaper if your prompts have overhead tokens at the beginning to explain the context of your prompt.

But there’s one quite large issue with doing this:

Simplicity Matters

If it’s practical, split your prompt into multiple different prompts that each solve simper subproblems.

A useful intuition for this might be that in a single forward pass (per-token prediction), GPT only has a limited capacity to calculate. The attention mechanism allows GPT to focus on different parts of your prompt at different times, so in theory it can effectively utilize this capacity during different independent parts of the solution. One might think that since problems are related or share overlapping subproblems themselves, addressing them together might give GPT greater context on both, or bias the solution productively.

This might be true for some problems if you are able to chain-of-thought prompt it to write intermediate answers to overlapping subproblems. But this might be difficult to pull off in general even if possible. As I describe below, CoT isn’t applicable everywhere, and wasn’t applicable here.

Chain of thought prompting doesn’t help with “system 1” type problems

In a single forward pass (per-token prediction), GPT only has a limited capacity to calculate. One way that has been identified to make up for this is Chain-of-Thought (CoT) prompting. By asking GPT to verbosely think on the problem first, you increase the number of forward passes where it is able to make calculations that might contribute to the final answer.

This method can be extremely useful when the problem requires solving subproblems, or if there are an explicit list of steps that could be written out to follow, or if there are implicit assumptions that could instead be made explicit by stating them. In other words, this is useful for problems that require “system 2” thinking — ones where you can describe how you go the answer.

But there are other problems in the realm of “system 1”. The hallmark of this type of thinking is that you can’t quite describe what it is that you are doing to produce an answer. Instead, it’s like you are summoning the answer from within yourself, and it just… appears. It’s more like a lookup table than an algorithm that could be debugged. An example of this is reading text. When you look at a street sign, the present word simply jumps into your mind. There’s no algorithm that you have to consciously be aware of in order to recognize the text.

For Categorcle, we are asking GPT whether or not some item belongs to a category. If I were to ask, “does the item ‘maple syrup’ belong to the category ‘pancake toppings’”, is there an algorithm you could follow to answer it?

When I tried CoT prompting GPT for these types of questions, it almost universally did not help. In some cases, it even degraded performance. The types of thoughts it spit out were akin to “maple syrup is commonly put on waffles and pancakes, so it belongs in the category”. There isn’t any intermediate problem being solved here, or any value added to later forward passes by this type of thought.

Locality matters

If possible, position your questions closely to where the answer is expected. If you are writing a prompt with multiple questions, you might be tempted to write questions in order, and show GPT you expect answers similarly, like below:

(role: user)

{

"question 1": "Can `dolphin` be thought of as example of, a type of, or a member of the category `aquatic animals`?",

"question 2": "Does `dolphin` represent the same underlying object or concept as `turtle`, `minnow`, or `whale`?",

}

(role: assistant)

{

"answer 1": "yes",

"answer 2": "no",

}

As the prompts grow with the number of questions, the probability of forgetting or exhibiting confusion about instructions grows significantly. When using more advanced models, this is less of an issue, but still worth being aware of. Prompting the model to rewrite the questions allows for better locality, and reduces the frequency of confusion, by letting the model simply fill in the blanks.

(role: user)

{

"question 1": "Can `dolphin` be thought of as example of, a type of, or a member of the category `aquatic animals`?",

"answer 1": "",

"question 2": "Does `dolphin` represent the same underlying object or concept as `turtle`, `minnow`, or `whale`?",

"answer 2": "",

}

(role: assistant)

{

"question 1": "Can `dolphin` be thought of as example of, a type of, or a member of the category `aquatic animals`?",

"answer 1": "yes",

"question 2": "Does `dolphin` represent the same underlying object or concept as `turtle`, `minnow`, or `whale`?",

"answer 2": "no",

}

Theory and practice

While large language models have incredible potential, they are not magic solutions in most practical cases. The path to generating high-quality results is often much more complex and time-consuming than what is presented in short demonstrations. However, with effective prompting, it is more possible than ever before to build apps with creative, personalized, or improvisational components. We are extremely early — I suspect than in short order, we will see GPT or other LLMs tuned for usability in backend logic, or specific conventions for backend logic. Maybe a fine-tuned model won’t even be necessary if system prompts are able to live up to their full potential.